Notes on configuring single-node Hadoop on Linux

Date: 2014-05-16

First, my environment:

Windows 7

VirtualBox 4.2.10

ubuntu-12.04.2-desktop-i386.iso

Hadoop 0.20.2

JDK 1.6.10

My configuration files

hosts

10.13.19.55 master

profile

export HADOOP_HOME=/usr/local/hadoop
export JAVA_HOME=/usr/local/java
export CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar:$HADOOP_HOME:$HADOOP_HOME/lib
export PATH=$PATH:$JAVA_HOME/bin:$HADOOP_HOME/bin

hadoop-env.sh

# The java implementation to use. Required.
export JAVA_HOME=/usr/local/java

hdfs-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>dfs.name.dir</name>

<value>/usr/local/hadoop/datalog1,/usr/local/hadoop/datalog2</value>

</property>

<property>

<name>dfs.data.dir</name>

<value>/usr/local/hadoop/data1,/usr/local/hadoop/data2</value>

</property>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

</configuration>

core-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>fs.default.name</name>

<value>hdfs://master:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/usr/local/hadoop/tmp</value>

</property>

</configuration>

mapred-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<!-- Put site-specific property overrides in this file. -->

<property>

<name>mapred.job.tracker</name>

<value>master:9001</value>

</property>

</configuration>

masters

master

slaves

master

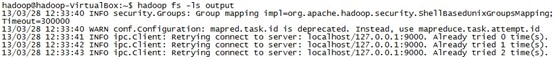

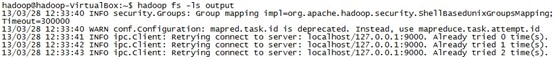

Problem 1: Retrying connect to server

13/03/28 12:33:41 INFO ipc.Client: Retrying connect to server: localhost/127.0.0.1:9000. Already tried 0 time(s).

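Possible causes:

1. Hadoop has not actually started. Run jps to check whether the relevant processes are there.

2. Check that the value of fs.default.name in core-site.xml matches the address being retried (hdfs://localhost:9000 in the log above).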
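Both checks can be scripted. The following is only a rough sketch: the function names `missing_daemons` and `get_hadoop_property` are my own, the daemon list assumes the five processes a Hadoop 0.20 single-node setup normally runs, and the XML lookup is a simple line-based match that expects each `<name>` and `<value>` to sit on one line.

```shell
# Print every expected Hadoop 0.20 daemon that is absent from
# `jps` output read on stdin (lines look like "1234 NameNode").
missing_daemons() {
    awk '
        { seen[$2] = 1 }
        END {
            n = split("NameNode DataNode SecondaryNameNode JobTracker TaskTracker", want, " ")
            for (i = 1; i <= n; i++)
                if (!(want[i] in seen)) print want[i]
        }
    '
}

# Print the <value> that follows <name>$2</name> in the site file $1.
get_hadoop_property() {
    awk -v name="$2" '
        index($0, "<name>" name "</name>") { found = 1 }
        found && match($0, /<value>[^<]*<\/value>/) {
            # Strip the 7-char <value> and the 8-char </value> tags.
            print substr($0, RSTART + 7, RLENGTH - 15)
            exit
        }
    ' "$1"
}
```

Typical use from the Hadoop installation directory would be `jps | missing_daemons` and `get_hadoop_property conf/core-site.xml fs.default.name`; the `conf/` location is where 0.20.2 keeps its site files, so adjust the path if yours differs.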

Create four folders under the Hadoop installation directory:

data1, data2, datalog1, datalog2
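A minimal sketch of creating those four folders in one go. The function name `make_hadoop_dirs` is hypothetical, and it takes the installation directory as an argument (`/usr/local/hadoop` in my setup) so that it is not hard-coded.

```shell
# Create the storage directories referenced by dfs.name.dir and
# dfs.data.dir under the Hadoop install directory given as $1.
make_hadoop_dirs() {
    for d in data1 data2 datalog1 datalog2; do
        mkdir -p "$1/$d" || return 1
    done
}
```

For example: `make_hadoop_dirs /usr/local/hadoop`, run as the user that will start Hadoop so that the daemons can write to the directories.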

Sometimes jps shows no NameNode process. In that case, stop everything with bin/stop-all.sh, format the NameNode, and then start Hadoop again:

hadoop@hadoop-VirtualBox:/usr/local/hadoop$ bin/hadoop namenode -format

13/03/28 14:08:10 INFO namenode.NameNode: STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting NameNode

STARTUP_MSG: host = hadoop-VirtualBox/127.0.1.1

STARTUP_MSG: args = [-format]

STARTUP_MSG: version = 0.20.2

STARTUP_MSG: build = https://svn.apache.org/repos/asf/hadoop/common/branches/branch-0.20 -r 911707; compiled by 'chrisdo' on Fri Feb 19 08:07:34 UTC 2010

************************************************************/

Re-format filesystem in /usr/local/hadoop/datalog1 ? (Y or N) Y

Re-format filesystem in /usr/local/hadoop/datalog2 ? (Y or N) Y

13/03/28 14:08:20 INFO namenode.FSNamesystem: fsOwner=hadoop,hadoop,adm,cdrom,sudo,dip,plugdev,lpadmin,sambashare

13/03/28 14:08:20 INFO namenode.FSNamesystem: supergroup=super
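The whole stop, format, start sequence can be wrapped up roughly as follows. This is a sketch under assumptions: the function name `restart_hadoop` is mine, and it relies on bin/start-all.sh, the counterpart of stop-all.sh shipped in the 0.20.2 bin directory.

```shell
# Stop all daemons, re-format the NameNode, then start everything again.
# $1 is the Hadoop install directory. Runs in a subshell so the
# caller's working directory is left untouched.
restart_hadoop() (
    cd "$1" || exit 1
    bin/stop-all.sh &&
    bin/hadoop namenode -format &&   # asks Y/N per existing dir; erases HDFS metadata
    bin/start-all.sh
)
```

Call it as `restart_hadoop /usr/local/hadoop`, answer Y to the re-format prompts as in the log above, and run jps afterwards to confirm that NameNode now appears.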
